Setting up NVIDIA and CUDA Environment

This document describes how to use ocboot's setup-ai-env command to configure NVIDIA drivers, CUDA, containerd, and other AI runtime components on an existing cluster or standalone machine, without deploying or modifying Cloudpods cloud management services. It applies to scenarios that require running GPU-dependent AI container applications such as Ollama.

- If the target environment does not have an NVIDIA GPU, or you do not need to run GPU-dependent AI container applications like Ollama, you can skip this document.

- Without the NVIDIA runtime environment configured, you can still run AI container applications that do not depend on a GPU, such as OpenClaw and Dify.

Download Driver Files

First, download the NVIDIA driver and CUDA installation packages (e.g., CUDA 12.8). Make sure to download the .run installation packages:

- NVIDIA driver: Available from NVIDIA Driver Downloads.

- CUDA installation package: Available from NVIDIA CUDA Toolkit Downloads.

After downloading, transfer the installation packages to the ocboot code directory on the machine running ocboot.sh.

The NVIDIA driver file and the CUDA installation file must be placed in the ocboot code directory, because ocboot.sh launches a buildah container to run the deployment code, and other paths on the host are not mapped into the container.

# Using rsync (recommended)

rsync -avP /path/to/nvidia/NVIDIA-Linux-x86_64-570.133.07.run target_host:/root/ocboot-master-v4.0.2-3

rsync -avP /path/to/cuda/cuda_12.8.1_570.124.06_linux.run target_host:/root/ocboot-master-v4.0.2-3
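Before running the deployment, it can help to confirm that both installers really sit inside the ocboot code directory. The following is a minimal sketch; the function name check_installers is hypothetical and not part of ocboot.

```shell
# Hypothetical helper (not part of ocboot): verify that the named installer
# files exist directly inside the ocboot code directory and end in .run.
check_installers() {
    local ocboot_dir="$1"
    shift
    local f status=0
    for f in "$@"; do
        case "$f" in
            *.run) ;;
            *)
                echo "not a .run package: $f"
                status=1
                continue
                ;;
        esac
        if [ -f "$ocboot_dir/$f" ]; then
            echo "ok: $ocboot_dir/$f"
        else
            echo "missing: $ocboot_dir/$f"
            status=1
        fi
    done
    return "$status"
}

# Example, using the directory and file names from the rsync commands above:
# check_installers /root/ocboot-master-v4.0.2-3 \
#     NVIDIA-Linux-x86_64-570.133.07.run \
#     cuda_12.8.1_570.124.06_linux.run
```

The function returns a non-zero status if any file is missing or is not a .run package, so it can gate a wrapper script.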

Command Format

Run ocboot.sh setup-ai-env to set up the NVIDIA container runtime environment on the target machine.

./ocboot.sh setup-ai-env <target_host1> [target_host2 ...] \
--nvidia-driver-installer-path /ocboot/<driver_file>.run \
--cuda-installer-path /ocboot/<cuda_file>.run \
[--gpu-device-virtual-number 2] [--user USER] [--key-file KEY] [--port PORT]

Examples

# Basic usage

./ocboot.sh setup-ai-env 10.127.222.247 \
--nvidia-driver-installer-path /ocboot/NVIDIA-Linux-x86_64-570.133.07.run \
--cuda-installer-path /ocboot/cuda_12.8.1_570.124.06_linux.run

# Specify GPU virtual number and SSH options

./ocboot.sh setup-ai-env 10.127.222.247 \
--nvidia-driver-installer-path /ocboot/NVIDIA-Linux-x86_64-570.133.07.run \
--cuda-installer-path /ocboot/cuda_12.8.1_570.124.06_linux.run \
--gpu-device-virtual-number 2 \
--user admin \
--port 2222
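The installer paths in these examples all start with /ocboot/, while the files themselves live in the ocboot code directory on the host. The sketch below assumes, as the examples suggest, that this directory is visible as /ocboot inside the deployment container. The helper names container_path and build_setup_cmd are hypothetical; the second one only prints the command so it can be reviewed before running.

```shell
# Hypothetical helper: map a file in the ocboot code directory to the path
# passed on the command line. Assumes the directory appears as /ocboot
# inside the container, which is inferred from the examples above.
container_path() {
    printf '/ocboot/%s\n' "$(basename "$1")"
}

# Hypothetical helper: print (not run) a basic setup-ai-env command line
# for one target host and the two installer files.
build_setup_cmd() {
    local host="$1" driver="$2" cuda="$3"
    printf './ocboot.sh setup-ai-env %s --nvidia-driver-installer-path %s --cuda-installer-path %s\n' \
        "$host" "$(container_path "$driver")" "$(container_path "$cuda")"
}

# Example:
# build_setup_cmd 10.127.222.247 \
#     /root/ocboot-master-v4.0.2-3/NVIDIA-Linux-x86_64-570.133.07.run \
#     /root/ocboot-master-v4.0.2-3/cuda_12.8.1_570.124.06_linux.run
```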

Parameter Reference

| Parameter | Required | Default | Description |
|---|---|---|---|
| --nvidia-driver-installer-path | Yes | - | Full path to the NVIDIA driver installation package. |
| --cuda-installer-path | Yes | - | Full path to the CUDA installation package. |
| --gpu-device-virtual-number | No | 2 | Virtual number for NVIDIA GPU shared devices. |
| --user / -u | No | root | SSH username. |
| --key-file / -k | No | - | SSH private key file path. |
| --port / -p | No | 22 | SSH port. |

Installation Steps and Workflow

ocboot will perform the following steps (among others) on the target host:

- Check OS support and local installation files (if paths are specified)

- Install kernel headers and development packages

- Clean up vfio-related configurations (if present)

- Install NVIDIA driver (if --nvidia-driver-installer-path is provided)

- Install CUDA environment (if --cuda-installer-path is provided)

- Configure GRUB (add nvidia-drm.modeset=1)

- Install NVIDIA Container Toolkit

- Configure containerd runtime

- Configure host device mappings (auto-discover the /dev/dri/renderD* nodes that correspond to NVIDIA GPUs and generate the configuration)

- Verify the installation
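Some of these steps leave traces that can be spot-checked by hand on the target host after the run. The sketch below is not ocboot's own verification logic; the function names are hypothetical, and it assumes that nvidia-smi (shipped with the driver) and nvidia-ctk (shipped with the NVIDIA Container Toolkit) end up in PATH.

```shell
# Succeeds if the kernel command line in $1 contains the exact parameter $2.
has_kernel_param() {
    case " $1 " in
        *" $2 "*) return 0 ;;
        *) return 1 ;;
    esac
}

# Hypothetical spot check, meant to be run on the target host.
verify_ai_env() {
    local tool
    # The GRUB change is only active after a reboot.
    if has_kernel_param "$(cat /proc/cmdline)" "nvidia-drm.modeset=1"; then
        echo "kernel parameter: ok"
    else
        echo "kernel parameter: nvidia-drm.modeset=1 not active"
    fi
    # Driver and container toolkit command-line tools.
    for tool in nvidia-smi nvidia-ctk; do
        if command -v "$tool" >/dev/null 2>&1; then
            echo "$tool: found"
        else
            echo "$tool: not found in PATH"
        fi
    done
    # DRM render nodes used for the host device mappings.
    if ls /dev/dri/renderD* >/dev/null 2>&1; then
        echo "render nodes: $(ls /dev/dri/renderD* | tr '\n' ' ')"
    else
        echo "render nodes: none"
    fi
}

# On the target host:
# verify_ai_env
```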

Important Notes

- During installation, the target host may automatically reboot after installing kernel packages or updating GRUB. Please be aware of this in advance.

- Ensure the target host has sufficient disk space and network connectivity to download dependencies such as the NVIDIA Container Toolkit.

- The installation packages must be prepared on the machine running ocboot and transferred to the target machine before the playbook runs. For transfer methods, refer to the Quick Start FAQ: How to transfer installation packages to the target machine?

- Ensure passwordless SSH login between the machine running ocboot and the target host (or use parameters such as --key-file).
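Passwordless login can be probed before starting a deployment. The sketch below uses the standard OpenSSH options BatchMode (fail instead of prompting for a password) and ConnectTimeout; the function names are hypothetical, and the defaults for user and port mirror the parameter table above.

```shell
# Hypothetical helper: print the ssh arguments for a non-interactive probe.
# Usage: ssh_probe_args HOST [USER] [PORT]
ssh_probe_args() {
    local host="$1" user="${2:-root}" port="${3:-22}"
    echo "-o BatchMode=yes -o ConnectTimeout=5 -p $port $user@$host"
}

# Hypothetical helper: run the probe and report the result.
check_ssh() {
    # Word splitting of the argument string is intentional here.
    if ssh $(ssh_probe_args "$@") true; then
        echo "passwordless SSH to $1: ok"
    else
        echo "passwordless SSH to $1: failed"
        return 1
    fi
}

# Example:
# check_ssh 10.127.222.247 admin 2222
```

If the probe fails, either set up key-based login for that user or pass the key explicitly with --key-file.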

FAQ

No GPU listed or GPU not detected in the host list after deployment?

Confirm that the NVIDIA driver and CUDA are installed on the target machine, and that the driver version matches the CUDA version. If you used run.py ai without passing --nvidia-driver-installer-path / --cuda-installer-path, you need to manually install the driver and CUDA on the target machine beforehand. After installation, run nvidia-smi to verify.
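One way to sanity-check the driver and CUDA pairing before installing is to compare the version numbers embedded in the two file names. NVIDIA's CUDA runfile names include the driver version bundled with that toolkit, so requiring the standalone driver to be at least that version is a conservative heuristic. This rule is an assumption of the sketch, not something ocboot enforces; the function names are hypothetical, and version_ge relies on sort -V (GNU coreutils).

```shell
# NVIDIA-Linux-x86_64-570.133.07.run -> 570.133.07
driver_version_from_name() {
    basename "$1" .run | sed 's/^NVIDIA-Linux-[^-]*-//'
}

# cuda_12.8.1_570.124.06_linux.run -> 570.124.06
cuda_bundled_driver_version() {
    basename "$1" .run | sed 's/^cuda_[^_]*_\(.*\)_linux$/\1/'
}

# Succeeds if version $1 is greater than or equal to version $2.
version_ge() {
    [ "$(printf '%s\n%s\n' "$1" "$2" | sort -V | head -n 1)" = "$2" ]
}

# Example:
# drv=$(driver_version_from_name NVIDIA-Linux-x86_64-570.133.07.run)
# min=$(cuda_bundled_driver_version cuda_12.8.1_570.124.06_linux.run)
# version_ge "$drv" "$min" && echo "driver $drv is new enough for this CUDA package"
#
# On a host where the driver is already installed, the running version can
# be read with:
# nvidia-smi --query-gpu=driver_version --format=csv,noheader
```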

Is it normal for the target host to automatically reboot during installation?

Yes. After installing kernel-related packages or updating GRUB, ocboot may trigger a reboot to load new drivers or configurations. Do not interrupt the process. If the deployment is not complete after the reboot, re-run the original deployment command to continue.

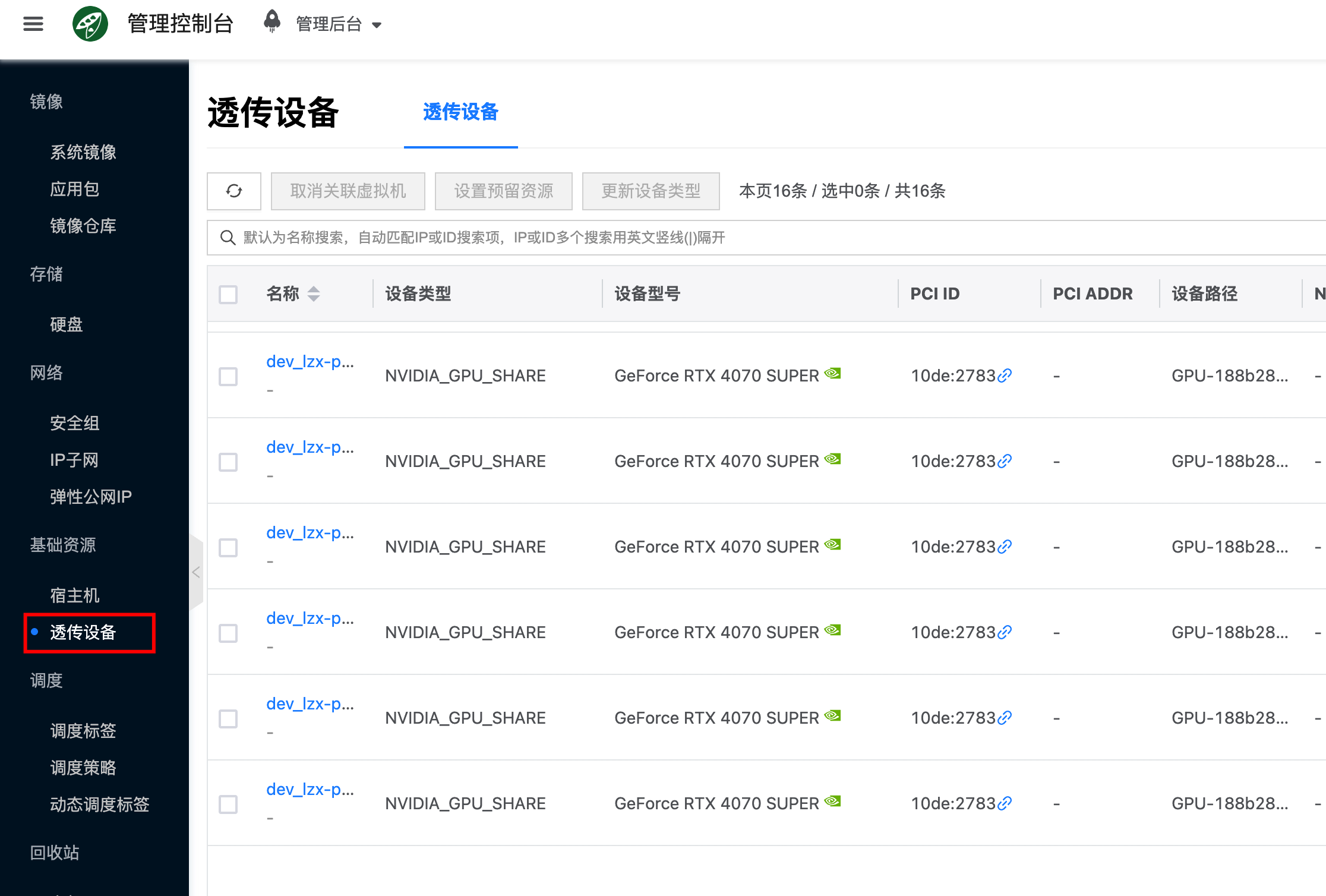

How to check if the platform has GPUs available?

If the configuration is successful, go to Compute -> Infrastructure -> Passthrough Devices to see the detected and reported GPUs.